Penmachine

23 April 2010

What Darwin didn't get wrong

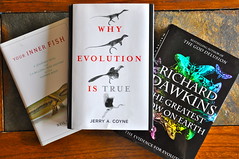

Last October I reviewed three books about evolution: Neil Shubin's Your Inner Fish, Jerry Coyne's Why Evolution is True, and Richard Dawkins's The Greatest Show on Earth: The Evidence for Evolution. It was a long review, but pretty good, I think.

There's another long multi-book review just published too. This one's written by the above-mentioned Jerry Coyne (who will be in Vancouver for a talk on fruit flies this weekend), and it covers both Dawkins's book and a newer one, What Darwin Got Wrong, by Jerry Fodor and Massimo Piattelli-Palmarini, which has been getting some press.

Darwin got a lot of things wrong, of course. There were a lot of things he didn't know, and couldn't know, about Earth and life on it—how old the planet actually is (4.6 billion years), that the continents move, that genes exist and are made of DNA, the very existence of radioactivity or of the huge varieties of fossils discovered since the mid-19th century.

It took decades to confirm, but Darwin was fundamentally right about evolution by natural selection. Yet that's where Fodor and Piattelli-Palmarini think he was wrong. Dawkins (and Coyne) disagree, siding with Darwin—as well as almost all the biologists working today or over at least the past 80 years (though apparently not Piattelli-Palmarini).

I'd encourage you to read the whole review at The Nation, but to sum up Coyne's (and others') analysis of What Darwin Got Wrong, Fodor (a philosopher) and Piattelli-Palmarini (a molecular biologist and cognitive scientist) seem to base their argument on, of all things, word games. They don't offer religious or contrary scientific arguments, nor do they dispute that evolution happens, just that natural selection, as an idea, is somehow a logical fallacy.

Here's how Coyne tries to digest it:

If you translate [Fodor and Piattelli-Palmarini's core argument] into layman's English, here's what it says: "Since it's impossible to figure out exactly which changes in organisms occur via direct selection and which are byproducts, natural selection can't operate." Clearly, [they] are confusing our ability to understand how a process operates with whether it operates. It's like saying that because we don't understand how gravity works, things don't fall.

I've read some excerpts of the book, and it also appears to be laden with eumerdification: writing so dense and jargon-filled that it seems designed to obscure rather than clarify. I suspect Fodor and Piattelli-Palmarini might have been so clever and convoluted in their writing that they even fooled themselves. That's a pity, because on the face of it, their book might have been a valuable exercise, but instead it looks like a waste of time.

Coyne, by the way, really likes Dawkins's book, probably more than I did. I certainly think it's a more worthwhile and far more comprehensible read.

Labels: books, controversy, darwin, evolution, review, science

08 February 2010

Choosing disposable books

For Christmas, my longtime friend Sebastien bought me an Amazon Kindle ebook reader. It's been great—while it has its flaws, it's a convenient and non-fatiguing way to read electronic documents, much more pleasant than the backlit screens of my laptop or iPhone (or, probably, the iPad). But I find it has also influenced my reading choices in an interesting way.

I first read a few ebooks that I had kicking around on my hard drive, mostly in plain-text format. I honestly don't remember where I got them, since I've had them so long. They're almost all science fiction titles, and judging by the oddball typos, most of them were obviously illegitimately scanned and OCRed years ago. But the Kindle does a good job with plain text, so I was impressed.

Next, I moved on to buying a few books at Amazon's Kindle Store. And it's the store that altered my choices. So far I've only bought three ebooks there, but the ones I've sampled without buying have been similar, and uncharacteristic for me.

Kindle books, like most ebooks these days, are locked down by DRM. That makes them significantly less portable and shareable than plain-text or other open formats, or than traditional paper books, and more vulnerable to digital rot, which could make them inaccessible years down the line. So the ebooks I have bought and read aren't the type I would previously have kept on my bookshelf. All of them, oddly enough, have been memoirs, not a genre I've previously chosen much:

- Infidel by Ayaan Hirsi Ali

- Tokyo Vice by Jake Adelstein

- American on Purpose by Craig Ferguson

I recommend all three, by the way, though Infidel is the best if you choose just one. But I consider memoirs generally disposable: I can read them once and not have much interest in re-reading them in the future. Maybe that's why my mom has always been a reader of memoirs and biographies. For decades, she has picked them up second-hand and breezed through them in a few days.

My gut feeling is that DRM-protected ebooks should cost less than they do: $5 to $7 feels about right, while the current $11 to $15 range for many mainstream titles (like the three I read) is too much—though I might regularly pay the higher price for unlocked ebooks. I don't think I'm alone in this: notice that many of Amazon's Kindle bestsellers are in the cheaper price range. Also notice that many of those books are old enough to be public domain, so no one has to pay the authors anymore. You can even get them for free, and unlocked, elsewhere.

Ebook prices can be more flexible than traditional hard-copy paper book prices, though. Publishers seem to want to charge $15 and up for in-demand new titles, and then lower prices to pick up more price-sensitive readers later—and they seem willing to fight to be able to do that. I'm willing to wait, so I guess that sort of arrangement would be okay with me.

I'd still prefer they ditched the DRM. And I'd still pay a bit more for that if they did.

Labels: amazon, books, drm, ebooks, gadgets, money

27 December 2009

Books old and new

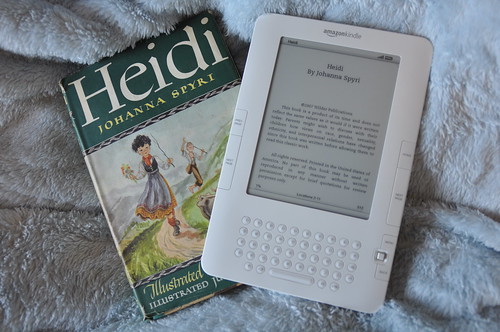

When my mother was a little girl, she received a copy of the classic children's book Heidi, printed in 1945. This year, she dug that same copy out and gave it to my older daughter M as a Christmas present.

One gift I received this year from my friend Sebastien was an Amazon Kindle e-book reader. You can, of course, get Heidi for it. The two make an interesting contrast:

The chances that my Kindle will still be around and working in 65 years, to give away to a grandchild? Virtually zero, of course.

P.S. I should note that, as public domain works, Heidi and Johanna Spyri's other books are available for free online too. You can put them on your Kindle as plain text files that work just great, instead of spending the $3 for digitally-locked DRM versions.

Labels: books, family, geekery, history, holiday, memories

24 November 2009

"The Origin" at 150

I wrote about it in much more detail back in February, but today is the actual 150th anniversary of Charles Darwin's On the Origin of Species, which was first published on November 24, 1859. That was more than two decades after Darwin first formulated his ideas about evolution by natural selection.

Some have called The Origin the most important book ever written, though of course many would dispute that. It's certainly up there on the list, and unequivocally on top for the field of biology. Darwin, along with others like Galileo, radically changed our perceptions about our place in the universe.

But Darwin was a scientist, not an inventor: he discovered natural selection, but did not create it. We honour him for being smart and tenacious, for being the first to figure out the basic mechanism that generated the history of life, and for writing eloquently and persuasively about it. His big idea was right (even if it took more than 70 years to confirm), but some of his conjectures and mechanisms turned out to be wrong.

He was also, from all accounts, an exceedingly nice man. Among towering intellects and important personalities, that's pretty unusual too.

Labels: anniversary, books, controversy, evolution, science

28 October 2009

Evolution book review: Dawkins's "Greatest Show on Earth," Coyne's "Why Evolution is True," and Shubin's "Your Inner Fish"

Next month, it will be exactly 150 years since Charles Darwin published On the Origin of Species in 1859. This year also marked what would have been his 200th birthday. Unsurprisingly, there are a lot of new books and movies and TV shows and websites about Darwin and his most important book this year.

Of the books, I've bought and read three of the highest-profile ones: Neil Shubin's Your Inner Fish (actually published in 2008), Jerry Coyne's Why Evolution is True, and Richard Dawkins's The Greatest Show on Earth: The Evidence for Evolution. They're all written by working (or, in Dawkins's case, officially retired) evolutionary biologists, but are aimed at a general audience, and tell compelling stories of what we know about the history of life on Earth and our part in it. A few months ago, I also re-read my copy of the first edition of the Origin itself, as well as legendary biologist Ernst Mayr's 2002 What Evolution Is.

Why now?

Aside from the Darwin anniversaries, I wanted to read the three new books because a lot has changed in the study of evolution since I finished my own biology degree in 1990. Or, I should say, not much has changed, but we sure know a lot more than we did even 20 years ago. As with any strong scientific idea, evidence continues accumulating to reinforce and refine it. When I graduated, for instance:

- DNA sequencing was rudimentary and horrifically expensive, and the idea of compiling data on an organism's entire genome was pretty much a fantasy. Now it's almost easy, and scientists are able to compare gene sequences to help determine (or confirm) how different groups of plants and animals are related to each other.

- Our understanding of our relationship with chimpanzees and our extinct mutual relatives (including Australopithecus, Paranthropus, Sahelanthropus, Orrorin, Ardipithecus, Kenyanthropus, and other species of Homo in Africa) was far less developed. With more fossils and more analysis, we know that our ancestors walked upright long before their brains got big, and that raises a host of new and interesting questions.

- The first satellite in the Global Positioning System had just been launched, so it was not yet easily possible to monitor continental drift and other evolution-influencing geological activities happening in real time (though of course it was well accepted from other evidence). Now, whether it's measuring how far the crust shifted during earthquakes or watching as San Francisco marches slowly northward, plate tectonics is as real as watching trees grow.

- Dr. Richard Lenski and his team had just begun what would become a decades-long study of bacteria, which eventually (and beautifully) showed the microorganisms evolving new biochemical pathways in a lab over tens of thousands of generations. That's substantial evolution occurring by natural selection, incontrovertibly, before our eyes.

- In Canada, the crash of Atlantic cod stocks and controversies over salmon farming in the Pacific hadn't yet happened, so the delicate balances of marine ecosystems weren't much in the public eye. Now we understand that human pressures can disrupt even apparently inexhaustible ocean resources, while impelling fish and their parasites to evolve new reproductive and growth strategies in response.

- Antibiotic resistance (where bacteria in the wild evolve ways to prevent drugs from being as effective as they used to) was on few people's intellectual radar, since it didn't start to become a serious problem in hospitals and other healthcare environments until the 1990s. As with cod, we humans have unwittingly created selection pressures on other organisms that work to our own detriment.

...and so on. Perhaps most shocking in hindsight, back in 1990 religious fundamentalism of all stripes seemed to be on the wane in many places around the world. By association, creationism and similar world views that ignore or deny that biological evolution even happens seemed less and less important.

Or maybe it just looked that way to me as I stepped out of the halls of UBC's biology buildings. After all, whether studying behavioural ecology, human medicine, cell physiology, or agriculture, no one there could get anything substantial done without knowledge of evolution and natural selection as the foundations of everything else.

Why these books?

The books by Shubin, Coyne, and Dawkins are not only welcome and useful in 2009, they are necessary. Because unlike in other scientific fields—where even people who don't really understand the nature of electrons or fluid dynamics or organic chemistry still accept that electrical appliances work when you turn them on, still fly in planes and ride ferryboats, and still take synthesized medicines to treat diseases or relieve pain—there are many, many people who don't think evolution is true.

No physicians must write books reiterating that, yes, bacteria and viruses are what spread infectious diseases. No physicists have to re-establish to the public that, honestly, electromagnetism is real. No psychiatrists are compelled to prove that, indeed, chemicals interacting with our brain tissues can alter our senses and emotions. No meteorologists need argue that, really, weather patterns are driven by energy from the Sun. Those things seem obvious and established now. We can move on.

But biologists continue to encounter resistance to the idea that differences in how living organisms survive and reproduce are enough to build all of life's complexity, over hundreds of millions of years, without any pre-existing plan or coordinating intelligence. Yet that's what happened, and we know it as well as we know anything.

If the Bible or the Qur'an is your only book, I doubt much will change your mind on that. But many of those who don't accept evolution by natural selection, or who are simply unsure of it, may have been taught poorly about it back in school—or if not, they might have forgotten the elegant simplicity of the concept. Not to mention the huge truckloads of evidence to support evolutionary theory, which is as overwhelming as (if not more so than), and more immediate than, the also-substantial evidence for our theories about gravity, weather forecasting, germs and disease, quantum mechanics, cognitive psychology, or macroeconomics.

Enjoying the human story

So, if these three biologists have taken on the task of explaining why we know evolution happened, and why natural selection is the best mechanism to explain it, how well do they do the job? Very well, but also differently. The titles tell you.

Shubin's Your Inner Fish is the shortest, the most personal, and the most fun. Dawkins's The Greatest Show on Earth is, well, the showiest, the biggest, and the most wide-ranging. And Coyne's Why Evolution is True is the most straightforward and cohesive argument for evolutionary biology as a whole—if you're going to read just one, it's your best choice.

However, of the three, I think I enjoyed Your Inner Fish the most. Author Neil Shubin was one of the lead researchers in the discovery and analysis of Tiktaalik, a fossil "fishapod" found on Ellesmere Island here in Canada in 2004. It is yet another demonstration of the predictive power of evolutionary theory: knowing that there were lobe-finned fossil fish about 380 million years ago, and obviously four-legged, land-dwelling, amphibian-like vertebrates 15 million years later, Shubin and his colleagues proposed that an intermediate form or forms might exist in rocks of intermediate age.

Ellesmere Island is a long way from most places, but it has surface rocks about 375 million years old, so Shubin and his crew spent a bunch of money to travel there. And sure enough, there they found the fossil of Tiktaalik, with its wrists, neck, and lungs like a land animal, and gills and scales like a fish. (Yes, it had both lungs and gills.) Shubin uses that discovery to take a voyage through the history of vertebrate anatomy, showing how gill slits from fish evolved over tens of millions of years into the tiny bones in our inner ear that let us hear and keep us balanced.

Since we're interested in ourselves, he maintains a focus on how our bodies relate to those of our ancestors, including tracing the evolution of our teeth and sense of smell, even the whole plan of our bodies. He discusses why the way sharks were built hundreds of millions of years ago led to human males getting certain types of hernias today. And he explains why, as a fish paleontologist, he was surprisingly qualified to teach an introductory human anatomy dissection course to a bunch of medical students—because knowing about our "inner fish" tells us a lot about why our bodies are this way.

Telling a bigger tale

Richard Dawkins and Jerry Coyne tell much bigger stories. Where Shubin's book is about how we people are related to other creatures past and present, the other two seek to explain how all living things on Earth relate to each other, to describe the mechanism of how they came to the relationships they have now, and, more pointedly, to refute the claims of people who don't think those first two points are true.

Dawkins best expresses the frustration of scientists with evolution-deniers and their inevitable religious motivations, as you would expect from the world's foremost atheist. He begins The Greatest Show on Earth with a comparison. Imagine, he writes, you were a professor of history specializing in the Roman Empire, but you had to spend a good chunk of your time battling the claims of people who said ancient Rome and the Romans didn't even exist. This despite those pesky giant ruins modern Romans have had to build their roads around, and languages such as Italian, Spanish, Portuguese, French, German, and English that are obviously derived from Latin, not to mention the libraries and museums and countrysides full of further evidence.

He also explains, better than anyone I've ever read, why various ways of determining the ages of very old things work. If you've ever wondered how we know when a fossil is 65 million years old, or 500 million years old, or how carbon dating works, or how amazingly well different dating methods (tree ring information, radioactive decay products, sedimentary rock layers) agree with one another, read his chapter 4 and you'll get it.

Alas, while there's a lot of wonderful information in The Greatest Show on Earth, and many fascinating photos and diagrams, Dawkins could have used some stronger editing. The overall volume comes across as scattershot, assembled more like a good essay collection than a well-planned argument. Dawkins often takes needlessly long asides into interesting but peripheral topics, and his tone wanders.

Sometimes his writing cuts precisely, like a scalpel; other times, his breezy footnotes suggest a doddering old Oxford prof (well, that is where he's been teaching for decades!) telling tales of the old days in black school robes. I often found myself thinking, Okay, okay, now let's get on with it.

Truth to be found

On the other hand, Jerry Coyne strikes the right balance and uses the right structure. On his blog and in public appearances, Coyne is (like Dawkins) a staunch opponent of religion's influence on public policy and education, and of those who treat religion as immune to strong criticism. But that position hardly appears in Why Evolution is True at all, because Coyne wisely thinks it has no reason to. The evidence for evolution by natural selection stands on its own.

I wish Dawkins had done what Coyne does—noting what the six basic claims of current evolutionary theory are, and describing why real-world evidence overwhelmingly shows them all to be true. Here they are:

- Evolution: species of organisms change, and have changed, over time.

- Gradualism: those changes generally happen slowly, taking at least tens of thousands of years.

- Speciation: populations of organisms not only change, but split into new species from time to time.

- Common ancestry: all living things have a single common ancestor—we are all related.

- Natural selection: evolution is driven by random variations in organisms that are then filtered non-randomly by how well they reproduce.

- Non-selective mechanisms: natural selection isn't the only way organisms evolve, but it is the most important.

The rest of Coyne's book, in essence, fleshes those claims and the evidence out. That's almost it, and that's all it needs to be. He recounts too why, while Charles Darwin got all six of them essentially right back in 1859, only the first three or four were generally accepted (even by scientists) right away. It took the better part of a century for it to be obvious that he was correct about natural selection too, and even more time to establish our shared common ancestry with all plants, animals, and microorganisms.

Better than other books about evolution I've read, Why Evolution is True reveals the relentless series of tests that Darwinism has been subjected to, and survived, as new discoveries were made in astronomy, geology, physics, physiology, chemistry, and other fields of science. Coyne keeps pointing out that it didn't have to be that way. Darwin was wrong about quite a few things, but he could have been wrong about many more, and many more important ones.

If inheritance didn't turn out to be genetic, or further fossil finds showed an uncoordinated mix of forms over time (modern mammals and trilobites together, for instance), or no mechanism like plate tectonics explained fossil distributions, or various methods of dating disagreed profoundly, or there were no imperfections in organisms to betray their history—well, evolutionary biology could have hit any number of crisis points. But it didn't.

Darwin knew nothing about some of these lines of evidence, but they support his ideas anyway. We have many more new questions now too, but they rest on the fact of evolution, largely the way Darwin figured out that it works.

The questions and the truth

Facts, like life, survive the onslaughts of time. Opponents of evolution by natural selection have always pointed to gaps in our understanding, to the new questions that keep arising, as "flaws." But they are no such thing: gaps in our knowledge tell us where to look next. Conversely, saying that a god or gods, some supernatural agent, must have made life—because we don't yet know exactly how it happened naturally in every detail—is a way of giving up. It says not only that there are things we don't know, but things we can never learn.

Some of us who see the facts of evolution and natural selection, much the way Darwin first described them, prefer not to believe things, but instead to accept them because of supporting scientific evidence. But I do believe something: that the universe is coherent and comprehensible, and that trying to learn more about it is worth doing for its own sake.

In the 150 years since the Origin, people who believed that—who did not want to give up—have been the ones who helped us learn who we, and the other organisms who share our planet, really are. Thousands of researchers across the globe help us learn that, including Dawkins exploring how genes, and ideas, propagate themselves; Coyne peering at Hawaiian fruit flies through microscopes to see how they differ over generations; and Shubin trekking to the Canadian Arctic on the educated guess that a fishapod fossil might lie there.

The writing of all three authors pulses with that kind of enthusiasm—the urge to learn the truth about life on Earth, over more than 3 billion years of its history. We can admit that we will always be somewhat ignorant, and will probably never know everything. Yet we can delight in knowing there will always be more to learn. Such delight, and the fruits of the search so far, are what make these books all good to read during this important anniversary year.

Labels: anniversary, books, controversy, evolution, religion, review, science

14 September 2009

Book Review: Say Everything

It's a bit weird reading Say Everything, Scott Rosenberg's book about the history of blogging. I've read lots of tech books, but this one involves many people I know, directly or indirectly, and an industry I've been part of since its relatively early days. I've corresponded with many of the book's characters, linked back and forth with them, even met a few in person from time to time. And I directly experienced and participated in many of the changes Rosenberg writes about.

The history the book tells, mostly in the first couple of hundred pages, feels right. He doesn't try to find The First Blogger, but he outlines how the threads came together to create the first blogs, and where things went after that. Then Rosenberg turns to analysis and commentary, which is also good. I never found myself thinking, Hey, that's not right! or You forgot the most important part!—and according to Rosenberg, that was the feeling about mainstream reporting that got people like Dave Winer blogging to begin with.

Say Everything came out only this year, in 2009, so much of what's in it is remarkably current. Rosenberg covers why blogging is likely to survive newer phenomena like Facebook and Twitter. And he doesn't hold back in his scorn for the largely old-fashioned thinking of his former newspaper colleagues (he used to work at the San Francisco Examiner before helping found Salon).

But then I hit page 317, where he writes:

...bloggers attend to philosophical discourse as well as pop-cultural ephemera; they document private traumas as well as public controversies. They have sought faith and spurned it, chronicled awful illnesses and mourned unimaginable losses. [My emphasis - D.]

That caused a bit of a pang. After all, that's what I've been doing here for the past few years. It hit close to home. Next, page 357:

For some wide population of bloggers, there is ample reason to keep writing about a troubled marriage or a cancer diagnosis or a death in the family, regardless of how many ethical dilemmas must be traversed, or how trivial or amateurish their labours are judged. [Again, my emphasis - D.]

Okay, sure, there are lots of cancer bloggers out there. I'm just projecting my own experience onto Rosenberg's writing, right? Except, several hundred pages earlier, Rosenberg had written about an infamous blogger dustup between Jason Calacanis and Dave Winer at the Gnomedex 2007 conference in Seattle.

The same conference where, via video link, I gave a presentation, about which Rosenberg wrote on his blog:

Derek K. Miller is a longtime Canadian blogger [who'd] been slated to give a talk at Gnomedex, but he’s still recovering from an operation, so making the trip to Seattle wasn’t in the cards. Instead, he spoke to the conference from his bed via a video link, and talked about what it’s been like to tell the story of his cancer experience in public and in real time. Despite the usual video-conferencing hiccups (a few stuttering images and such), it was an electrifying talk.

Later that month, he mentioned me in an article in the U.K.'s Guardian newspaper. When he refers to people blogging about a cancer diagnosis, he doesn't just mean people like me, he means me. Thus I don't think I can be objective about this book. I think it's a good one. I think it tells an honest and comprehensive story about where blogging came from and why it's important. Yet I'm too close to the story—even if not by name, I'm in the story—to evaluate it dispassionately.

Then again, as Rosenberg writes, one of blogging's strengths is in not being objective: in declaring your interests and conflicts, forging ahead with your opinion and analysis anyway, and interacting online with other people who have other opinions.

So, then: Say Everything is a good book. You should read it—after all, not only does it talk about a lot of people I know, I'm in it too!

Labels: blog, books, cancer, gnomedex, history, review, web

07 May 2009

The Kindle DX is getting there

I have to say, Amazon's newly-announced Kindle DX, with a much larger screen than the original Kindle, is looking pretty good as an e-book reader. It's bigger, has more storage, and reads PDFs natively without conversion.

Too bad that, like its predecessor, it's unavailable in Canada (or anywhere outside the U.S.). It remains a bit of a polarizing device, but Amazon obviously believes this is where things are going. They're one of the only companies that might make it happen—if they're right about e-books being the future.

The DX is pricey, at almost $500 USD, and its e-ink technology is still a bit immature. However, maybe in a few years we'll be reading those Stanley Kubrick–style book pads after all, only a decade or so late.

05 February 2009

The big gap

For the past couple of weeks I've been reading God's Crucible, a history book by David Levering Lewis—which I gave to my dad for Christmas, but which he's loaned back to me. Today I got a lot of reading done, because my body was screwing with me: I'll have to skip my cancer medications for the next day or two because the intestinal side effects, unusually, lasted all day today and into the night, and I have to give my digestive system (and my butt) a rest.

Lewis's book covers, at its core, a 650-year span from the 500s to the 1200s, during which Islam began, and rapidly spread the influence of its caliphate from Arabia across a big swath of Eurasia and northern Africa, while what had been the Roman Empire simultaneously fragmented into its Middle Ages in Europe. That's a time I've known little about until now.

The history I took in school and at university covered lots of things, from ancient Egypt and Babylon up to the Roman Empire, and from the Renaissance to the 20th century, as well as medieval England. I learned something about the history of China and India, as well as about the pre-Columbian Americas. But pre-Renaissance Europe and the simultaneous rapid expansion of the dar al-Islam are a particularly big gap for me.

Whose Dark Ages?

I knew a little about Moorish Spain, and a tad about Charlemagne and Constantinople, but otherwise it was just the Dark Ages for me, and for a lot of students who learned the typical Eurocentric view of history. There was much more going on in more populated and interesting parts of the world, though. While Europeans (not yet even called that) eked out a living with essentially no currency, trade, or much cultural exchange beyond warfare with horses, swords, and arrows:

- China went through the Sui, Tang, and Song dynasties, reaching the peak of classical Chinese art, culture, and technology.

- India also saw several dynasties and empires, as well as its own Muslim sultans.

- Large kingdoms of the Sahel in near-equatorial Africa arose, including those of Ghana, Takrur, Malinke, and Songhai.

- The Maya in Central America developed city-states and the only detailed written language in the Western Hemisphere.

- The seafaring Polynesians reached their most distant Pacific outposts, including Easter Island, Hawaii, and New Zealand.

Crucially important, of course, was Islam. Muhammad was born in the year 570, and shortly after he died about six decades later, Muslim armies exploded out of Arabia and conquered much of the Middle East and North Africa, then expanded east and north into Central Asia. By the early 700s, the influence of the caliphate headquartered in Damascus had reached Spain (al-Andalus), where Muslims would remain in power for 700 years.

In his book, Lewis flies through the origins of Islam, the foundations of the Byzantine and Roman branches of the Catholic Church after the fall of Rome, the interminable conflict between the Byzantine Empire and Zoroastrian Persia to the east, the Muslim caliphate's sudden rise up the middle to take over the eastern and southern Mediterranean, and the crossing of Islamic armies into Europe at Jebel Tariq (now called Gibraltar) in 711. Then things bog down a little.

I'm about half-way through the book, and Lewis throws out so many names, alternate names for the same people, events, places, and such that it's hard to keep up. He hops back and forth in time enough that what story he's trying to tell isn't always clear. He has won the Pulitzer Prize, but that was for more focused historical biographies, such as his work on W.E.B. Du Bois, rather than sweeping surveys like this one. His writing isn't overly complex, and I'm learning a lot, but it's a tough go sometimes. Still, I think it will be worth sticking it out.

Labels: books, europe, history, religion, review

27 June 2008

An author chimes in

Steve Ettlinger, author of Twinkie, Deconstructed, which I wrote about recently, left a comment saying that my blog post was his favorite review of the book.

In part that must be because I liked it, but it also seems that most other reviewers missed the winking irony in his use of Registered Trademark Symbols® throughout, which reminded me of Douglas Coupland's early-'90s novel Shampoo Planet. In that previous case the brands were made up, but the effect is similar: as a reader you feel a bit uncomfortable being hyper-aware of them.

I like that Ettlinger is keeping track of online reviews, in addition to those in traditional publications and media.

Labels: books, food, linkbait, writing

09 June 2008

Book review: "Twinkie, Deconstructed," deconstructed

Anyone who's ever eaten a Twinkie remembers the experience, even if it's been years. The textured, firm, sweet dough combined with the intense vanilla creme (not cream, mind you) filling is distinctive and, especially when you're a kid, delicious, yet obviously somehow sinful and wrong and unnatural at the same time.

While I was in hospital last week, my wife brought me Steve Ettlinger's book Twinkie, Deconstructed (buy using my affiliate code at Amazon Canada or U.S.A.). It's a perfect "Derek's sick" book: a sort of "science lite" non-fiction tome that's fascinating, informative, and non-polemical while still making a political point. I finished it in a little over a day.

The concept is brilliant. Prompted by a question from one of his kids, Ettlinger, a long-time science and consumer products writer, tells a story of traveling around the world to find out where each of the dozens of ingredients in a Hostess Twinkie comes from—in the order in which they're listed on the package. In doing so, he visits a lot more factories than farms, and encounters many more industrial centrifuges than ploughs.

Some reviewers think that Ettlinger got co-opted into the "Twinkie-Industrial Complex" (as he calls it) during the writing of the book. They think that he is too accepting, too uncritical, and indeed too friendly to the various large corporate interests who show him (or, in many cases, refuse to show him) around their facilities and processes. But I think he's smarter and more subversive than that.

Here's something from page 195:

In an undisclosed location, perhaps in an industrial park near Chicago, maybe in rural, central Pennsylvania, possibly in riparian Delaware, in a plant full of tanks, railroad sidings, and a maze of pipes and catwalks, big, stainless steel vats are filled with fresh, hot, luscious, liquefied sorbitan monostearate.

Or check out this label-text Kremlinology from page 255:

...while it seems that not one natural color is used in Twinkies, sometimes the label has said "color added," which would make me suspect that annato, the butter and cheese colorant that is popular with [Hostess's] competitors, is indeed in the mix. But their punctuation indicates otherwise. "Color added" is followed by "(yellow 5 red 40)" which would seem to indicate grammatically that they are the only colors involved.

One of the most obvious stylistic effects throughout the book is that whenever Ettlinger first mentions a trademarked product, he adds the registered trademark symbol: Yoo-hoo® Chocolate Drink, PAM® cooking spray, Clabber Girl®, Davis®, and Calumet® baking soda, and so on. Normally you'd only see things written that way in a press release or corporate brochure.

You might think he was simply pressured by company lawyers, but when I read the book every trademark symbol seemed to me like a wink from the author, an unavoidable reminder that while he's breezing along in his personal, gee-whiz style, he hasn't forgotten that the process of Twinkie-making is huge and industrial, one that has only a little to do with baking and nourishment, and a lot with multinational chemical firms and drill rigs and mines and massive tract farms.

Twinkie, Deconstructed is no Silent Spring, or even Super Size Me. It's neither a manifesto nor a satire. It's not horrified at what Twinkies are made of—because the fact that many ingredients originate from petroleum or minerals rather than food plants or animals is part of the Twinkie legend. What's surprising is only how far some of those ingredients have to travel, and how extensively they have to be mangled, reprocessed, ground, dissolved, flung, and dried before they get used in even minute quantities to bake those little cakes.

Ettlinger's book is, I think, more effective because he doesn't politicize it overtly. He simply tells us, repeatedly and relentlessly, about conveyor belts, pipes, pressure vessels, railroad cars, noxious chemical reactions, huge stainless steel tanks, monstrous earth-moving equipment, and what obviously must be enormous quantities of energy used in all those processes. He talks just as blithely about factories that refuse to tell him where their ingredients come from at all as he does about friendly chemical engineers who show him around less secretive facilities. You can draw your own conclusions.

I did find myself wishing, at the end, that he had calculated how much energy a single Twinkie consumes in its manufacture—how much oil or coal or gas, or how many kilowatt-hours of electricity, it takes to bring all those ingredients together. And I was surprised that, after nearly 300 pages of background, Ettlinger never actually describes step-by-step how a Twinkie is made at the Hostess bakery.

But Twinkie, Deconstructed is a fun read. Whether you should feel safe eating a Twinkie afterwards is a conclusion the book lets you reach on your own, rather than clubbing you over the head with it.

Labels: books, food, linkbait, writing

15 May 2008

Other stuff

I took in my old broken MacBook power adapter yesterday, and unfortunately Apple requires that the dealer keep it while they wait for the replacement. That means I temporarily have no way to charge my laptop, so I have to ration its time for when I really need it.

So I find myself spending much less time randomly surfing around. Instead, I've followed up Walter Isaacson's Einstein, which I finished recently, with James Gleick's Genius, a biography of perhaps the 20th century's second-greatest physicist, Richard Feynman. Plus I finally got my Griffin iMate USB-ADB adapter and hooked up one of my Apple Extended Keyboard IIs, as well as the accompanying Apple Desktop Bus Mouse II.

I'd forgotten how nice the old ADB Mouse II feels too, despite using a physical mouse ball instead of a modern LED or laser sensor. Its shape feels better in my hand than Apple's new Mighty Mouse and most third-party mice, the plastic is solid, and the single-button click is great. While I miss the right-click and scroll ball, I may even keep using the old ADB mouse. I'll certainly keep going with the great old keyboard.

Labels: apple, books, geekery, repairs, usb

18 March 2008

Farewell to Sir Arthur

While I have to admit that his fiction was sometimes a bit wooden, Sir Arthur C. Clarke, who died today, was one of the true visionaries of the last century. Geostationary telecommunications satellites, the ones we all use now for all sorts of things, were his idea. He helped with the deployment of radar in World War II. And in novels like Rendezvous With Rama and Childhood's End, he imagined how humans might react if we found out we aren't alone in the universe.

In many ways, he helped build the frame around our modern ideas about astronomy, cosmology, and space travel. He was no doubt disappointed that we didn't pursue the kind of space program started in the 1950s and '60s. We're certainly far from the routine orbital and lunar trips he and Stanley Kubrick forecasted in 2001.

He was something of an eccentric: he married only once, briefly, decades ago, and spent the last 50 years of his life in Sri Lanka after leaving his native Britain. He was also a master of pithy quotes: "Any sufficiently advanced technology is indistinguishable from magic." "If there are any gods whose chief concern is man, they cannot be very important gods." "Any teacher that can be replaced by a machine should be!" "There is hopeful symbolism in the fact that flags do not wave in a vacuum." "I don't believe in astrology; I'm a Sagittarian and we're sceptical."

I read a lot of his stuff when I was young, and he is one reason I turned into a geek and a science major. He lived a long life, to 90, but it's still a sad day that he's gone.

Labels: age, arthurcclarke, astronomy, books, death, science, sciencefiction

12 December 2007

Book Review: Why Beautiful People Have More Daughters

When I read Why Beautiful People Have More Daughters (buy at Amazon Canada or Amazon U.S.), I wasn't angry, but I was uncomfortable, and not because one of the authors of this brand-new volume has been dead for almost five years. The book is a summary of the new field of evolutionary psychology, which shows that our evolutionary past strongly influences how humans think and behave today.

UPDATE March 2008: It looks like the main author of this book, Satoshi Kanazawa, is a bit of a wingnut, and also may not be analyzing many of his statistics correctly. I stand by my review of the book here, but my reservations listed in it (especially that there is very little information about what many of the mechanisms of evolutionary psychology are) have become stronger with new evidence. Overall, I'm likely to look at his work more skeptically from now on.

You can see why that might be discomfiting: most of us like to think that we're independent actors, making decisions based on thought, and maybe influenced by our upbringing and our environment. Sometimes we are. But Alan S. Miller (the dead one, who got the project started) and Satoshi Kanazawa (the living one, who finished it) show how often we're not. If you're a creationist or think that all evil derives from patriarchal traditions and corporate media, this book will bug the hell out of you.

Publisher Penguin sent me the book to review at the suggestion of Darren Barefoot. Although my biology degree is a couple of decades old now, I find nothing in the fundamental premises of evolutionary psychology shocking. It only makes sense that our human brain, like those of all other animals (more so, since we depend so much on it), has evolved along with the rest of our body, adapting through natural selection to our environment.

Or, as Miller and Kanazawa point out, to what used to be our environment. We behave, make decisions, and organize ourselves the way we do today largely because it helped our ancestors survive and reproduce in Africa tens of thousands of years ago.

That's where things get interesting, and where the discomfort and controversy arise. Take this, from page 95:

Of course, diamonds and flowers are beautiful, but they are beautiful precisely because they are expensive and lack intrinsic value, which is why it is mostly women who think that diamonds and flowers are beautiful. Their beauty lies in their inherent uselessness; this is why Volvos and potatoes are not beautiful.

A major foundation of evolutionary psychology is that sex drives everything. Or, more accurately, that the differences between how men's and women's genes propagate to our descendants drive much of our behaviour, from the obvious (mating rituals) to the puzzling (wars, jobs, when we choose to travel, what we like to buy). We're just like dogs bred to be aggressive or good at herding sheep, or like birds and fish adapted to flocking and schooling, or predators that survive because natural selection molded their brains to know how to stalk and pounce and kill.

The result is many provocative statements about human beings:

- Divorced parents with children are playing a game of chicken, and it is usually the mother who swerves.

- Men do everything they do in order to get laid.

- Any reasonably attractive young woman exercises as much power as does the (male) ruler of the world.

- Clear evidence of women's promiscuity [...] is the size and shape of men's genitals and what men do with them.

- We believe in God for the same reason that men constantly think that women are coming on to them.

- It is the wife's age, not the husband's, that prompts [a man's] "midlife crisis."

- Religion is not an adaptation in itself but a byproduct of other adaptations. [...] The human brain [...] is biased to perceive intentional forces behind a wide range of natural physical phenomena [and thus] to see the hand of God at work.

- Humans are [...] born racist and ethnocentric, and learn through socialization and education not to act on such innate tendencies.

- Sometimes [a woman saying] "no" [to sex] really does mean "try a little harder."

Why Beautiful People Have More Daughters makes a reasonable case, with lots of reputable research backing it up, that much of the conventional wisdom of psychology and sociology is wrong. The authors and their evolutionary psychologist colleagues argue that many of the improper, cruel, unfair, and evil things (or, for that matter, altruistic, pleasant, equitable, and good things) that people do are not the result of childhood environments, cultural traditions, or power structures.

Rather, our behaviours today—whether our current ethics and morals judge them good or bad—are the same behaviours that helped our ancestors' genes propagate, and thus are the reason we're here now.

Men killing their wives, other men, and their stepchildren. Women wanting, and men liking, long lustrous blonde hair. Religiously motivated suicide bombers being almost exclusively young male Muslims. People of both sexes preferring blue eyes to brown. Women choosing older and more powerful men as mates, but more attractive men as lovers. Essentially all human societies permitting either polygyny (men with multiple wives or mistresses) or serial polygyny (men who marry, divorce, and remarry, usually to younger women). Young single women often traveling abroad to experience the world while their male cohorts tend to stay home and hate foreigners. All have explanations in evolutionary psychology, some more solid than others.

The writing in the book is sometimes a bit manic, as if the authors were yanking me as a reader from example to example, saying, "Look! Look! We're right again!" Some of their conclusions come with lots of convincing scientific evidence, not to mention theoretical predictions about human behaviour that turn out to be true. But others are apparently pure speculation. I also think many of their explanations would have been clearer using the past tense, rather than the present, to keep the role of our ancestral environment clear.

They do show that beautiful people tend to have more daughters, and why that makes sense, but the physiological mechanism of how it happens wasn't clear to me. And neither the authors nor their editors seem to know what "begs the question" is actually supposed to mean.

To be fair, Miller and Kanazawa take pains to note that many of the things we do make little sense in the modern world (meaning the fast-changing one we've been in for the past 10,000 years or so, since the invention of agriculture). But because those behaviours evolved over hundreds of thousands or millions of years before that, we can't help ourselves. And the authors also highlight some areas—homosexuality, declining birthrates in industrialized countries, the willingness to become a soldier—that their field can't explain very well.

We still love sweet and fatty foods, which were once rare and precious but are now overabundant and giving us health problems. Similarly, we behave in ways that begat us more children when living in small groups of hunter-gatherers in a sub-tropical savannah, but which may not be of similar benefit in a world of fast cars, 80-year lifespans, high explosives, supermarkets, birth control, jet travel, antibiotics, and Internet dating.

What made me uncomfortable about the book is that, as a bleeding-heart leftie, of course I want to believe that we are not so driven and constrained by our evolutionary history. But I'm also trained in biology and—even more after reading Miller and Kanazawa—it's clear to me that, like other animals, we must be.

But what we do is not always what we ought to do: Why Beautiful People Have More Daughters reinforces repeatedly that facts (what is) do not determine morals (what should be). As a parallel, knowing that fleas spread bubonic plague doesn't make the plague desirable, and that knowledge is key to combating the disease. But, conversely, the way we think things should be isn't necessarily the way they are either. Wanting human nature to compel us to treat each other fairly and well doesn't make that true. We have to find different reasons to make it happen, to overcome much of what is innate in us.

That is yet another lesson of the modern world that my brain, prehistoric as it is, has trouble handling.

Labels: biology, books, controversy, evolution, naturalselection, psychology

25 October 2007

How to read the Bible

There's been a bit of a buzz recently about the book The Year of Living Biblically, where author A.J. Jacobs, an agnostic Jew, chose to live by all the rules in the Bible (even the obscure ones, and mostly from the Old Testament) as much as he could, for an entire year. The Bible is of course a fascinating book, even for atheists like me, for whom it is not a divine revelation but a magnificent human construction.

Like the Quran, the Hindu Vedas, Buddhist Sutras, the Analects of Kong Fuzi (Confucius), and the myths and stories of everyone from the Egyptians, the Greeks and Romans, and the Norse to the Inca, the Haida, and the vast diaspora of Polynesia, the Bible has profoundly affected, and been the foundation of, huge parts of human culture and history. I know less about it (and those other works) than I should.

I'm not sure Jacobs's book would be my best resource, though I'm sure it's funny and revealing—in a radio interview with Jacobs I heard this morning, I discovered that wearing clothes whose fibres mix wool and linen is apparently forbidden. Daily showers, however, seem to be fine, even though I've heard of some religious Christians and Jews who disdain "bathing for pleasure." And in the Bible (New Testament especially), there appears to be a whole lot more about helping the poor and needy than the behaviour of a lot of people who claim to be literalist Christians would indicate.

Perhaps a better introduction would be How to Read the Bible, by Richard Holloway, the controversial retired Anglican Bishop of Edinburgh. On the most recent CBC "Ideas" podcast, he and Paul Kennedy engage in a fascinating discussion (MP3, available on CD once that MP3 link expires) of his book.

Richard Dawkins, probably today's most prominent atheist, was raised Anglican, and he has called Anglicanism a watered-down, weakened strain of the virus he thinks religion is. Holloway surely does not disabuse Dawkins of that assessment, especially when he writes things like:

One of the constant themes of the Bible is the human capacity to get God wrong, which is why the distance between those who believe God is a human invention and those who believe God is real is narrower than we might think.

and:

The afterlife of great texts [like the Bible] eclipses the intention of the original author, even if we think we know what it was.

and:

Retrojecting ideas from a later perspective into the text of the Hebrew Bible became a major enterprise in Christianity, with two branches, historical and theological.

Those are just from the first chapter. I knew nothing about Holloway until I heard the podcast, but I suspect that a lot of believing people—especially those prone to Biblical literalism—no longer consider him a good Anglican, or a good Christian, regardless of his previous senior role in the church.

Now, by nature, I know pretty much nothing about the past 2000 years of Christian theology, just as I am largely ignorant of what intellectuals among Muslims, Hindus, Sikhs, Jews, Zoroastrians, Jains, Buddhists, and others have thought and written about their own faiths—or what modern academics outside those religions have to say about them. I'm not particularly interested in becoming a religious expert either, for even all religions put together are only a part of how humans try to understand and organize the world (not the part that most interests me).

But from what I have heard and read this week, I like Holloway's approach, and will probably read his book at some point, which I guess means I'll need to get to reading that Bible eventually too. From previous comments on this blog (and surely some more to come on this post), a small number of you readers might think doing so will lead to a born-again conversion in me, especially with my cancer and everything now, or that I've already consigned myself to Hell unless I repent pretty darn soon.

If I were you, I wouldn't bet any money on that.

Labels: bible, books, cbc, controversy, podcast, religion, science

24 October 2007

Links of interest (2007-10-23):

- Speaking of the whole Dumbledore thing, here's a funny list: "Gay marriage will encourage people to be gay, in the same way that hanging around tall people will make you tall."

- "What is it about the world of enterprise software that routinely produces such inelegant user experiences?" One explanation: "'Enterprise software' is software that has to be sold to an 'enterprise', where someone who doesn't use the software (typically a manager) must be persuaded to use his purchasing authority to buy the software."

- Secrets that airlines don't want you to know (nothing too earth-shattering).

- 25 awesome action heroes.

- "Power users are a minority, and while they point the way to the future, they tend to be disappointed when the rest of the market catches up with an inferior product that has a lower barrier to new users."

- Gmail is finally getting IMAP support. If POP-only support frustrated you until now, you'll find that useful.

- "If it happened under Bush, Iran-Contra wouldn't even make Page A-18."

Labels: books, design, gmail, government, linksofinterest, movie, travel

23 October 2007

Waaaay belated thank you

Back in June, I turned 38 and my wife threw me a big awesome party. I had a great time, but I was in a lot of pain at the time, and it was only a week before I went in for major surgery, spending most of July in hospital.

So I have a bit of an excuse for not taking careful note of every gift from every person—especially among the stack of gift cards and certificates that have been sitting next to my bed for the past four months. Today my wife and I walked to the mall, bringing along a couple of bookstore gift cards from that pile, and discovered that one of them was worth $150! And I have no idea who gave it to me (it wasn't labeled for purchaser or amount).

So I'll take this opportunity to say a long-belated thank you again to everyone who came to the party, and to everyone who gave me a gift, even if I don't know what you gave me.

After a solid week of pounding, soaking rain, it is also a spectacular, sunny, warm autumn day, up to 16°C this afternoon, and I'm back-porch blogging for perhaps the last time this year. I'm feeling good. Thanks for that too.

Labels: birthday, books, cancer, family, shopping